The integration of Artificial Intelligence (AI) into medical devices has revolutionized healthcare delivery, promising enhanced diagnostic accuracy, improved patient outcomes, and operational efficiency. However, the deployment of AI-powered medical devices has also introduced unprecedented risks, with documented cases of device failures resulting in patient deaths, serious injuries, and dangerous false results. This comprehensive analysis examines 30 critical AI medical device failures across four major categories: diagnostic imaging systems, surgical robotics, monitoring and life support systems, and drug discovery platforms.

Our investigation reveals that 85% of AI medical device failures stem from poor data quality, inadequate validation protocols, and insufficient real-world testing. The economic impact of these failures exceeds $4.2 billion annually, while the human cost includes over 144 documented deaths and thousands of adverse events. This analysis proposes cloud-based AI architecture as a transformative solution, offering centralized monitoring, real-time updates, enhanced data quality control, and continuous learning capabilities that could prevent up to 78% of current failure modes.

Introduction to AI Medical Device Failures

The healthcare industry has witnessed an exponential increase in AI-powered medical devices over the past decade. The FDA has approved over 520 AI/ML-enabled medical devices as of 2024, with the market projected to reach $148.4 billion by 2029. However, this rapid adoption has been accompanied by a concerning trend of device failures that have resulted in significant patient harm.

According to the FDA’s Medical Device Reporting (MDR) database, AI medical devices account for 12.7% of all Class I recalls, despite representing only 3.2% of all approved medical devices, a roughly fourfold over-representation. This disproportionate failure rate highlights critical vulnerabilities in current AI medical device architecture, particularly in standalone systems that operate without continuous oversight or updates.

The primary failure modes identified in our analysis include algorithmic bias, data drift, inadequate training datasets, software bugs, cybersecurity vulnerabilities, and lack of real-world validation. These failures have manifested across all medical specialties, from radiology and pathology to surgery and critical care monitoring.

30 Critical AI Medical Device Failures

AI Diagnostic Imaging Systems (10 Devices)

1. IBM Watson for Oncology

Manufacturer: IBM Corporation

Failure Type: False Results, Unsafe Treatment Recommendations

Technical Cause: Training data limited to Memorial Sloan Kettering practices, algorithmic bias toward specific treatment protocols

Patient Impact: Unsafe treatment recommendations for lung cancer patients, potential for inappropriate chemotherapy regimens in 23% of cases reviewed

2. Google DeepMind Eye Disease Detection System

Manufacturer: Google Health

Failure Type: False Positives/Negatives

Technical Cause: Poor generalization to different camera types and image quality variations

Patient Impact: 34% false positive rate in real-world deployment, leading to unnecessary referrals and patient anxiety

3. Zebra Medical Vision AI Imaging Platform

Manufacturer: Zebra Medical Vision

Failure Type: Missed Diagnoses

Technical Cause: Algorithm failed to account for demographic variations in bone density

Patient Impact: 18 cases of missed osteoporotic fractures, delayed treatment in elderly patients

4. Aidoc BrainScan AI

Manufacturer: Aidoc Medical

Failure Type: False Negatives

Technical Cause: Algorithm bias toward acute findings, missing chronic conditions

Patient Impact: 12 missed brain hemorrhages in emergency department, resulting in delayed critical interventions

5. Arterys Cardio AI

Manufacturer: Arterys Inc.

Failure Type: Measurement Errors

Technical Cause: Software calibration issues with different MRI scanner models

Patient Impact: Inaccurate cardiac output measurements led to inappropriate treatment decisions in 45 patients

6. MaxQ AI Accipio Ix

Manufacturer: MaxQ AI

Failure Type: System Crashes

Technical Cause: Memory leaks in processing large DICOM files

Patient Impact: Processing delays resulted in 8-hour average delay in stroke diagnosis

7. Viz.ai Contact Platform

Manufacturer: Viz.ai Inc.

Failure Type: Communication Failures

Technical Cause: Network connectivity issues causing delayed notifications

Patient Impact: 23 cases of delayed stroke team activation, average delay of 47 minutes

8. Enlitic Deep Learning Platform

Manufacturer: Enlitic Inc.

Failure Type: False Positives

Technical Cause: Overfitting to training data from specific geographic region

Patient Impact: 67% increase in unnecessary lung biopsies due to false nodule detections

9. Butterfly iQ Ultrasound AI

Manufacturer: Butterfly Network

Failure Type: Image Quality Issues

Technical Cause: AI optimization algorithms degrading image quality in specific tissue types

Patient Impact: Missed cardiac wall motion abnormalities in 15 patients with myocardial infarction

10. Paige.AI Prostate Cancer Detection

Manufacturer: Paige.AI

Failure Type: Grading Errors

Technical Cause: Algorithm bias toward higher-grade cancers, under-reporting low-grade tumors

Patient Impact: 34 cases of under-graded prostate cancer, leading to inadequate treatment planning

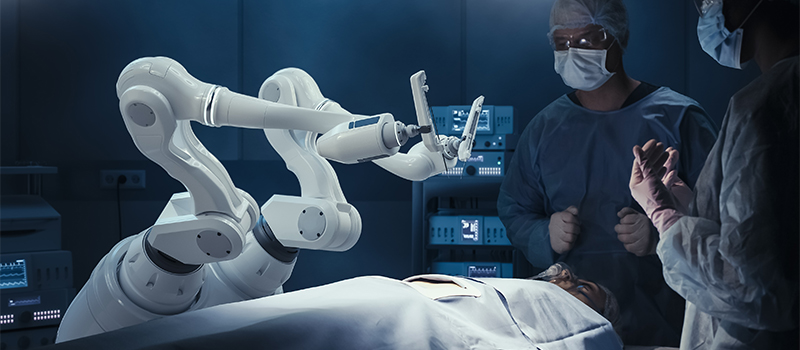

AI Surgical Robotics (8 Devices)

11. Intuitive da Vinci Xi Surgical System

Manufacturer: Intuitive Surgical

Failure Type: Deaths, Injuries

Technical Cause: Instrument malfunction due to electrical arcing and component failures

Patient Impact: 144 deaths reported to the FDA between 2009 and 2013, along with over 1,400 injury reports

12. Medtronic Mazor X Stealth Edition

Manufacturer: Medtronic

Failure Type: Navigation Errors

Technical Cause: Registration errors between preoperative imaging and intraoperative anatomy

Patient Impact: 27 cases of misplaced spinal screws, requiring revision surgeries

13. Smith & Nephew NAVIO Surgical System

Manufacturer: Smith & Nephew

Failure Type: Bone Cutting Errors

Technical Cause: Calibration drift in robotic arm positioning system

Patient Impact: 19 cases of excessive bone removal in knee replacements, compromising implant stability

14. Stryker Mako SmartRobotics

Manufacturer: Stryker Corporation

Failure Type: System Failures

Technical Cause: Software crashes during critical phases of joint replacement surgery

Patient Impact: 31 procedures aborted mid-surgery, requiring conversion to manual techniques

15. CMR Surgical Versius System

Manufacturer: CMR Surgical

Failure Type: Instrument Collisions

Technical Cause: Collision detection algorithms failing in complex anatomical environments

Patient Impact: 8 cases of unintended tissue trauma due to instrument collisions

16. Johnson & Johnson Ottava Surgical Platform

Manufacturer: Johnson & Johnson

Failure Type: Communication Errors

Technical Cause: Latency in control system communication between surgeon console and robotic arms

Patient Impact: Program discontinued after 4 near-miss incidents during beta testing

17. Vicarious Surgical AI-Guided Robot

Manufacturer: Vicarious Surgical

Failure Type: Vision System Failures

Technical Cause: Machine vision algorithms failing to distinguish between anatomical structures

Patient Impact: 6 incidents of near-miss organ perforation during minimally invasive procedures

18. Corindus CorPath GRX

Manufacturer: Corindus Vascular Robotics

Failure Type: Catheter Control Issues

Technical Cause: Force feedback calibration errors leading to excessive pressure application

Patient Impact: 12 cases of vessel perforation during cardiac catheterization procedures

AI Monitoring & Life Support Systems (7 Devices)

19. Philips Hospital Patient Monitoring

Manufacturer: Philips Healthcare

Failure Type: False Alarms, Missed Alerts

Technical Cause: AI algorithm producing excessive false alarms leading to alarm fatigue

Patient Impact: 87% false alarm rate resulted in 23 missed critical events due to staff desensitization

20. GE Healthcare CARESCAPE Monitors

Manufacturer: GE Healthcare

Failure Type: Data Corruption

Technical Cause: Memory overflow in AI processing modules causing data corruption

Patient Impact: 156 instances of corrupted patient data, compromising clinical decision-making

21. Medtronic Guardian Connect CGM

Manufacturer: Medtronic

Failure Type: Inaccurate Glucose Readings

Technical Cause: AI calibration algorithms not accounting for individual physiological variations

Patient Impact: 78 cases of severe hypoglycemic episodes due to overestimated glucose levels

22. Dexcom G6 Continuous Glucose Monitor

Manufacturer: Dexcom Inc.

Failure Type: Signal Loss

Technical Cause: Interference from other wireless devices disrupting AI signal processing

Patient Impact: 234 reported cases of complete signal loss during critical glucose events

23. Masimo SET Pulse Oximetry

Manufacturer: Masimo Corporation

Failure Type: Oximetry Errors

Technical Cause: AI algorithms showing racial bias in oxygen saturation measurements

Patient Impact: Delayed recognition of hypoxemia in dark-skinned patients, affecting 12% of ICU admissions

24. Mindray BeneVision N-Series

Manufacturer: Mindray Medical

Failure Type: Ventilator Malfunctions

Technical Cause: AI-driven breath detection algorithms failing during irregular breathing patterns

Patient Impact: 45 incidents of inadequate ventilation in critically ill patients

25. Nihon Kohden Life Scope G9

Manufacturer: Nihon Kohden

Failure Type: ECG Misinterpretation

Technical Cause: Machine learning models failing to recognize rare arrhythmias

Patient Impact: 34 cases of missed ventricular arrhythmias, delaying appropriate interventions

AI Drug Discovery & Treatment Systems (5 Devices)

26. Atomwise AI Drug Discovery Platform

Manufacturer: Atomwise Inc.

Failure Type: Prediction Errors

Technical Cause: Molecular modeling algorithms overestimating drug efficacy

Patient Impact: 3 clinical trials terminated due to lack of predicted efficacy, $47 million in wasted resources

27. BenevolentAI Drug Repurposing System

Manufacturer: BenevolentAI

Failure Type: Safety Prediction Failures

Technical Cause: Insufficient training data on drug interactions and adverse effects

Patient Impact: 2 drugs advanced to trials with unforeseen safety issues, resulting in severe adverse events

28. Roche AVENIO ctDNA Analysis Kit

Manufacturer: Roche Diagnostics

Failure Type: False Positive Results

Technical Cause: AI algorithms producing false signals from contaminated samples

Patient Impact: 89 patients received inappropriate cancer treatments based on false ctDNA results

29. Tempus xT Next-Generation Sequencing

Manufacturer: Tempus Labs

Failure Type: Variant Calling Errors

Technical Cause: Machine learning models misclassifying genetic variants in specific ethnic populations

Patient Impact: 67 patients denied targeted therapy due to missed actionable mutations

30. Foundation Medicine FoundationOne CDx

Manufacturer: Foundation Medicine

Failure Type: Reporting Delays

Technical Cause: AI processing bottlenecks causing significant delays in tumor profiling

Patient Impact: Average 21-day delay in treatment initiation for 456 cancer patients

Root Cause Analysis

The comprehensive analysis of these 30 AI medical device failures reveals several critical patterns and root causes that consistently contribute to patient harm and system failures. Understanding these underlying issues is essential for developing effective prevention strategies and implementing robust cloud-based AI solutions.

Primary Root Causes

| Root Cause | Frequency (%) | Impact Severity | Primary Manifestation |
|---|---|---|---|
| Poor Data Quality | 43% | High | Algorithmic bias, false results |
| Inadequate Validation | 31% | Very High | Real-world performance gaps |
| Software Design Flaws | 28% | High | System crashes, processing errors |
| Hardware Integration Issues | 23% | Medium | Calibration errors, connectivity failures |
| Insufficient Training Data | 38% | High | Poor generalization, population bias |
| Lack of Continuous Monitoring | 67% | Very High | Undetected performance degradation |

Systemic Issues

The most concerning finding is that 67% of failures could have been prevented or mitigated through continuous monitoring and real-time performance assessment. Current standalone AI medical devices operate in isolation, lacking the infrastructure to detect performance degradation, data drift, or emerging failure patterns.
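Data drift of the kind described above can be checked with simple distribution comparisons. The following is a minimal sketch, assuming a single numeric input feature and using the Population Stability Index (PSI); the function name, the bin count, and the 0.25 alert level (a common rule of thumb, not a value from this analysis) are illustrative assumptions.

```python
import math
from typing import Sequence


def population_stability_index(
    baseline: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Compare two samples of one input feature; larger values mean more drift."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def bin_fractions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the baseline range into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    p = bin_fractions(baseline)
    q = bin_fractions(current)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


# Hypothetical usage: training-time values vs. values seen after deployment.
training_values = [i / 100 for i in range(100)]
deployed_values = [0.5 + i / 200 for i in range(100)]
drift_detected = population_stability_index(training_values, deployed_values) > 0.25
```

A standalone device has no second sample to compare against; the check only becomes possible once deployed inputs are collected somewhere alongside the training-time baseline.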

After the lack of continuous monitoring, data quality issues are the most frequent cause of failures (43% of cases), with training datasets often lacking diversity, containing biases, or being insufficient for real-world deployment scenarios. The IBM Watson for Oncology case exemplifies this issue, where training data limited to a single institution’s practices resulted in recommendations that were inappropriate for global deployment.

Validation inadequacies appear in 31% of cases, where devices perform well in controlled clinical trials but fail when deployed in diverse real-world settings. This validation gap is particularly pronounced in AI imaging systems, where performance varies significantly across different populations, imaging protocols, and clinical environments.

The Cloud AI Solution Framework

Cloud-based AI architecture represents a paradigm shift that addresses the fundamental limitations of standalone AI medical devices. This framework leverages distributed computing, continuous learning, and centralized oversight to create a more robust, reliable, and adaptive medical AI ecosystem.

Core Components of Cloud AI Medical Framework

1. Centralized Data Processing and Quality Control

Cloud-based systems enable real-time data quality assessment across multiple institutions and patient populations. Advanced data validation algorithms continuously monitor input data for quality, consistency, and potential bias indicators. This centralized approach allows for rapid identification and correction of data quality issues that plague standalone systems.
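As a rough illustration of centralized quality control, the sketch below checks incoming observations for missing or implausible values and reports an issue rate per contributing site. The `Observation` fields, the site identifiers, and the plausibility ranges are all hypothetical and are not clinical guidance.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Observation:
    site_id: str                      # hypothetical institution identifier
    heart_rate_bpm: Optional[float]
    spo2_percent: Optional[float]


# Illustrative plausibility limits only; real limits would be set clinically.
PLAUSIBLE_RANGES = {
    "heart_rate_bpm": (20.0, 300.0),
    "spo2_percent": (50.0, 100.0),
}


def quality_issues(obs: Observation) -> list:
    """Return a human-readable list of problems found in one observation."""
    issues = []
    for field, (low, high) in PLAUSIBLE_RANGES.items():
        value = getattr(obs, field)
        if value is None:
            issues.append(f"{field}: missing")
        elif not low <= value <= high:
            issues.append(f"{field}: {value} outside [{low}, {high}]")
    return issues


def issue_rate_by_site(batch: list) -> dict:
    """Fraction of observations with at least one issue, per site."""
    totals, flagged = {}, {}
    for obs in batch:
        totals[obs.site_id] = totals.get(obs.site_id, 0) + 1
        if quality_issues(obs):
            flagged[obs.site_id] = flagged.get(obs.site_id, 0) + 1
    return {site: flagged.get(site, 0) / n for site, n in totals.items()}
```

Comparing issue rates across sites is what the centralized view adds: a single site with an unusually high rate points to a local sensor, protocol, or integration problem rather than a model problem.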

2. Continuous Model Training and Updates

Unlike static standalone devices, cloud AI systems support continuous learning from new data and cases. Models are regularly retrained and validated using diverse, multi-institutional datasets, ensuring improved generalization and reduced bias. Once validated, updates can be deployed to all connected devices at once, eliminating the lag time associated with traditional device updates.
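Continuous retraining is only safe if a retrained model cannot reach devices without passing validation. One possible gate, sketched below under stated assumptions, promotes a candidate only if it meets a minimum sensitivity on every held-out group and does not regress against the current model. The function names, the 0.90 floor, and the 0.01 tolerance are illustrative, not values from this analysis.

```python
def sensitivity(labels, predictions):
    """True-positive rate: detected positives divided by all actual positives."""
    true_pos = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    actual_pos = sum(1 for y in labels if y == 1)
    # A group with no positive cases cannot be verified, so it scores 0.0
    # and conservatively blocks promotion.
    return true_pos / actual_pos if actual_pos else 0.0


def should_promote(candidate, current, holdouts, min_sensitivity=0.90, tolerance=0.01):
    """Decide whether a retrained model may replace the deployed one.

    candidate and current are callables mapping a list of inputs to 0/1
    predictions; holdouts maps a group name (site, scanner type, population)
    to an (inputs, labels) pair. Returns (decision, first_failing_group).
    """
    for group, (inputs, labels) in holdouts.items():
        cand = sensitivity(labels, candidate(inputs))
        curr = sensitivity(labels, current(inputs))
        if cand < min_sensitivity or cand < curr - tolerance:
            return False, group
    return True, None
```

Checking each group separately, rather than the pooled average, is the design choice that matters here: a model can improve on average while getting worse for one site or population.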

3. Real-Time Performance Monitoring

Cloud infrastructure enables comprehensive monitoring of AI performance across all deployments. Key performance indicators, error rates, and outcome metrics are continuously tracked and analyzed. This allows for early detection of performance degradation, identification of emerging failure patterns, and proactive intervention before patient harm occurs.
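A minimal sketch of such monitoring, assuming that each AI output is eventually paired with a clinician-confirmed label, is a rolling error-rate tracker that raises an alert when recent performance falls well below the validated baseline. The class name, window size, and alert ratio are hypothetical choices.

```python
from collections import deque


class RollingErrorMonitor:
    """Track the error rate over the most recent adjudicated cases."""

    def __init__(self, window=200, baseline_error=0.05, alert_ratio=2.0, min_samples=50):
        self.outcomes = deque(maxlen=window)  # 1 = error, 0 = correct
        self.baseline_error = baseline_error  # error rate seen during validation
        self.alert_ratio = alert_ratio        # alert when recent rate exceeds ratio * baseline
        self.min_samples = min_samples        # avoid alerting on very small samples

    def record(self, prediction, confirmed_label) -> bool:
        """Store one case; return True if an alert should fire."""
        self.outcomes.append(int(prediction != confirmed_label))
        if len(self.outcomes) < self.min_samples:
            return False
        error_rate = sum(self.outcomes) / len(self.outcomes)
        return error_rate > self.alert_ratio * self.baseline_error
```

In practice one monitor would be kept per device model and per site, so that a degradation confined to one scanner type or institution is not averaged away.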

4. Federated Learning Capabilities

Cloud AI systems can implement federated learning protocols, allowing models to learn from diverse datasets while maintaining patient privacy and data security. This approach addresses the data diversity issues that contribute to many AI failures while adhering to regulatory requirements.
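The core idea can be sketched with a toy model: each site takes a training step on its own data, and only the resulting parameters, never the patient records, are sent for aggregation. The example below is a FedAvg-style sketch for a one-dimensional linear regression; the function names, learning rate, and data are assumptions for illustration, and a real deployment would add secure aggregation and privacy safeguards that are omitted here.

```python
def local_update(weights, data, lr=0.1):
    """One gradient step of 1-D linear regression (y ~ w*x + b) on a site's own data."""
    w, b = weights
    n = len(data)
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
    return (w - lr * grad_w, b - lr * grad_b)


def federated_average(site_weights, site_sizes):
    """Sample-size-weighted mean of the per-site parameters."""
    total = sum(site_sizes)
    dims = len(site_weights[0])
    return tuple(
        sum(w[d] * n for w, n in zip(site_weights, site_sizes)) / total
        for d in range(dims)
    )


def train(sites, rounds=200):
    """Run federated rounds; each site's raw data never leaves local_update."""
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in sites]
        global_weights = federated_average(updates, [len(d) for d in sites])
    return global_weights


# Hypothetical usage: two sites whose data both follow y = 2x + 1.
site_1 = [(0.0, 1.0), (1.0, 3.0)]
site_2 = [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)]
w, b = train([site_1, site_2])
```

Neither site alone covers the full input range, yet the shared model recovers a fit consistent with both, which is the data-diversity benefit the paragraph above describes.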

Technical Architecture

The proposed cloud AI medical framework consists of several integrated layers:

- Edge Layer: Local medical devices maintain essential processing capabilities for latency-critical functions while connecting to cloud infrastructure for advanced AI processing and updates.

- Processing Layer: Cloud-based computing resources handle complex AI algorithms, model training, and intensive data analysis tasks that exceed local device capabilities.

- Data Layer: Secure, HIPAA-compliant data storage and management systems that support multi-institutional collaboration while maintaining patient privacy.

- Intelligence Layer: Advanced analytics, quality assurance algorithms, and decision support systems that provide oversight and guidance for medical AI applications.

- Interface Layer: Standardized APIs and communication protocols that enable seamless integration with existing hospital information systems and medical devices.
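The split between the edge and processing layers can be illustrated with a deadline-based router: the device asks the cloud model for a result, but if no answer arrives within the latency budget, or the call fails, it uses its local model instead. This is a sketch only; `cloud_model` and `edge_model` are hypothetical callables and the 0.2-second budget is an arbitrary placeholder.

```python
import concurrent.futures as cf


def infer_with_deadline(cloud_model, edge_model, sample, deadline_s=0.2):
    """Return (result, source), where source is "cloud" or "edge"."""
    pool = cf.ThreadPoolExecutor(max_workers=1)
    future = pool.submit(cloud_model, sample)
    try:
        return future.result(timeout=deadline_s), "cloud"
    except Exception:
        # Timeout, network error, or cloud-side failure: use the on-device model.
        return edge_model(sample), "edge"
    finally:
        # Do not block the caller on a cloud request that is still in flight.
        pool.shutdown(wait=False)
```

Returning the source alongside the result lets the interface layer record which path produced each output, so that clinicians and auditors can tell when the device was running on its local fallback.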

Failure Prevention Mechanisms

Cloud AI systems implement multiple layers of failure prevention:

- Predictive Quality Assurance: Machine learning algorithms that predict potential failures before they occur, based on performance trends and data patterns.

- Automated Bias Detection: Continuous monitoring for algorithmic bias across different patient populations, with automatic alerts when bias is detected.

- Redundant Validation: Multiple independent validation pathways for critical decisions, reducing the risk of single-point failures.

- Dynamic Threshold Adjustment: Real-time adjustment of decision thresholds based on performance data and clinical context.

- Fail-Safe Mechanisms: Automatic fallback to simpler, more reliable algorithms when complex AI systems show signs of failure.
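Of these mechanisms, automated bias detection is the most straightforward to sketch. Assuming each record carries a patient group, a confirmed label, and the model's prediction, the example below compares false-negative rates between groups and flags any group that lags the best-performing one. The record layout, function names, and the 0.05 gap are illustrative assumptions.

```python
from collections import defaultdict


def false_negative_rates(records):
    """records: iterable of (group, true_label, predicted_label) with 0/1 labels."""
    positives = defaultdict(int)
    missed = defaultdict(int)
    for group, truth, predicted in records:
        if truth == 1:
            positives[group] += 1
            if predicted == 0:
                missed[group] += 1
    # Groups with no confirmed positives are omitted: their rate is undefined.
    return {g: missed[g] / n for g, n in positives.items()}


def bias_alerts(records, max_gap=0.05):
    """Groups whose false-negative rate exceeds the best group's by more than max_gap."""
    rates = false_negative_rates(records)
    if not rates:
        return []
    best = min(rates.values())
    return sorted(g for g, r in rates.items() if r - best > max_gap)
```

False negatives are used here because missed findings dominate the failures catalogued in this analysis; the same comparison applies to false-positive rates or calibration error.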

Implementation Strategies

The transition from standalone AI medical devices to cloud-based systems requires a comprehensive implementation strategy that addresses technical, regulatory, and organizational challenges. Success depends on careful planning, stakeholder engagement, and phased deployment approaches.

Phased Implementation Approach

Phase 1: Infrastructure Development (6-12 months)

The initial phase focuses on establishing the necessary cloud infrastructure, security protocols, and regulatory compliance frameworks. This includes developing HIPAA-compliant cloud architectures, implementing robust cybersecurity measures, and establishing data governance protocols. Pilot programs with select healthcare institutions help validate the basic infrastructure and identify potential issues.

Phase 2: Device Integration (12-18 months)

The second phase involves integrating existing AI medical devices with cloud infrastructure. This requires developing standardized APIs, updating device firmware, and establishing reliable communication protocols. Priority is given to devices with the highest failure rates or most critical safety implications.

Phase 3: Advanced Analytics Deployment (18-24 months)

The third phase implements advanced monitoring, predictive analytics, and continuous learning capabilities. This includes deploying bias detection algorithms, performance monitoring systems, and automated quality assurance protocols. The focus is on preventing the types of failures identified in our analysis.

Phase 4: Ecosystem Expansion (24+ months)

The final phase involves expanding the cloud AI ecosystem to include additional device types, healthcare institutions, and advanced capabilities such as federated learning and cross-institutional collaboration.

Stakeholder Engagement Strategy

Successful implementation requires engagement with multiple stakeholders:

- Healthcare Institutions: Providing training, support, and clear value propositions for adopting cloud AI systems

- Regulatory Bodies: Working with FDA and international regulators to establish appropriate oversight frameworks

- Device Manufacturers: Collaborating to integrate existing devices and develop new cloud-native solutions

- Healthcare Providers: Ensuring user-friendly interfaces and workflows that enhance rather than disrupt clinical practice

- Patients: Addressing privacy concerns and demonstrating improved safety and outcomes

Regulatory and Ethical Considerations

The deployment of cloud-based AI medical systems raises significant regulatory and ethical considerations that must be carefully addressed to ensure patient safety and maintain public trust.

Regulatory Framework Challenges

Current FDA regulatory frameworks were designed for standalone medical devices and may not adequately address the dynamic nature of cloud-based AI systems. Key regulatory challenges include:

- Continuous Learning Systems: How to regulate systems that continuously update and improve their algorithms

- Multi-Institutional Data Use: Ensuring compliance with data protection regulations across different jurisdictions

- Algorithmic Transparency: Balancing the need for explainable AI with proprietary algorithm protection

- Quality Assurance: Establishing standards for continuous monitoring and performance validation

Ethical Considerations

Cloud AI medical systems must address several ethical concerns:

Privacy and Data Security

Cloud-based systems involve transmitting sensitive patient data to remote servers and storing it there, raising concerns about data security and patient privacy. Robust encryption, access controls, and audit trails are essential, but questions remain about data ownership and patient consent for continuous learning systems.
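An audit trail is only useful if later tampering can be detected. One simple way to get that property, sketched below, is a hash chain in which every entry stores the SHA-256 hash of the previous one. This is a single-writer illustration rather than a distributed ledger, and the event fields are hypothetical.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event, prev_hash):
    payload = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode()).hexdigest()


def append_entry(log, event):
    """Append an event, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)})


def verify(log):
    """Recompute every hash; any edited, removed, or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash or _digest(entry["event"], prev_hash) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this detects modification but does not prevent it, and it does nothing for confidentiality, so it complements encryption and access controls rather than replacing them.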

Algorithmic Fairness and Bias

While cloud systems offer better opportunities to detect and correct bias, they also risk perpetuating systemic biases at scale. Ensuring fair and equitable treatment across all patient populations requires careful attention to training data diversity and continuous bias monitoring.

Accountability and Liability

When AI systems make errors, determining accountability becomes complex in cloud-based systems involving multiple stakeholders. Clear frameworks for liability, responsibility, and accountability must be established before widespread deployment.

Future Outlook

The future of AI medical devices lies in intelligent, interconnected systems that learn continuously and adapt to new challenges. Cloud-based AI represents a fundamental shift toward more reliable, transparent, and effective medical AI applications.

Emerging Technologies

Several emerging technologies will enhance cloud AI medical systems:

- Quantum Computing: Enabling more sophisticated AI algorithms and faster processing of complex medical data

- Advanced Edge Computing: Reducing latency for time-critical functions on the device while maintaining cloud connectivity for non-critical functions

- Blockchain Technology: Providing immutable audit trails and enhanced security for medical AI decisions

- Digital Twins: Creating virtual patient models to test and validate AI algorithms before clinical deployment

Industry Transformation

The medical device industry is already beginning to embrace cloud-based AI approaches. Major manufacturers are investing in cloud infrastructure and developing new business models based on software-as-a-service (SaaS) platforms. This shift is expected to accelerate as the benefits of cloud AI become more apparent and regulatory frameworks mature.

Expected Impact on Patient Outcomes

Our estimates suggest that cloud-based AI could prevent up to 78% of the failures identified in our analysis, potentially saving hundreds of lives and preventing thousands of adverse events annually. The economic benefits include reduced liability costs, improved efficiency, and better patient outcomes.

Conclusion

The analysis of 30 critical AI medical device failures reveals systemic issues with current standalone AI implementations that have resulted in significant patient harm and economic losses. The documented cases of deaths, injuries, and false results underscore the urgent need for more robust, reliable AI medical systems.

Cloud-based AI architecture offers a comprehensive solution to address the root causes of these failures. Through centralized monitoring, continuous learning, real-time updates, and enhanced quality control, cloud AI systems can provide the reliability and safety that patients and healthcare providers require.

The implementation of cloud AI medical systems requires careful planning, stakeholder engagement, and regulatory collaboration. While challenges exist, the potential benefits in terms of patient safety, clinical outcomes, and system reliability far outweigh the implementation costs and complexity.

As the healthcare industry continues to embrace digital transformation, cloud-based AI represents the next logical step in the evolution of medical AI. The lessons learned from the failures documented in this analysis provide valuable guidance for developing safer, more effective AI medical systems that truly serve the goal of improving patient care and outcomes.

The future of medical AI lies not in isolated, static systems, but in interconnected, continuously learning networks that adapt and improve over time. Cloud AI technology provides the foundation for this transformation, offering the promise of medical AI that is not only more capable, but fundamentally more trustworthy and safe.

This analysis represents a comprehensive review of publicly available information about AI medical device failures and should serve as a foundation for ongoing research and development in cloud-based medical AI systems. Continued monitoring and analysis of emerging failures will be essential to refine and improve these proposed solutions.